Agentic AI

Agentic AI refers to a class of artificial intelligence systems designed to act autonomously toward defined goals, making decisions, initiating actions, and adapting behavior based on changing conditions and feedback. Unlike traditional AI models that primarily respond to direct inputs with outputs, agentic AI systems operate with a degree of independence, often orchestrating multiple steps, tools, or processes to achieve an objective over time. The term has gained prominence alongside advances in large language models, automation frameworks, and multi-agent systems, where software entities increasingly resemble goal-driven actors rather than passive tools.

The concept of agency in artificial intelligence draws from cognitive science and philosophy, where an “agent” is defined as an entity capable of perceiving its environment, making decisions, and acting upon those decisions. In computing, early forms of agents appeared in rule-based systems and autonomous software programs, but these were typically constrained by rigid logic and limited adaptability. The emergence of modern AI architectures—particularly those combining language models, reinforcement learning, and tool integration—has expanded the practical meaning of agency, enabling systems to plan, reason, and execute tasks across dynamic environments.

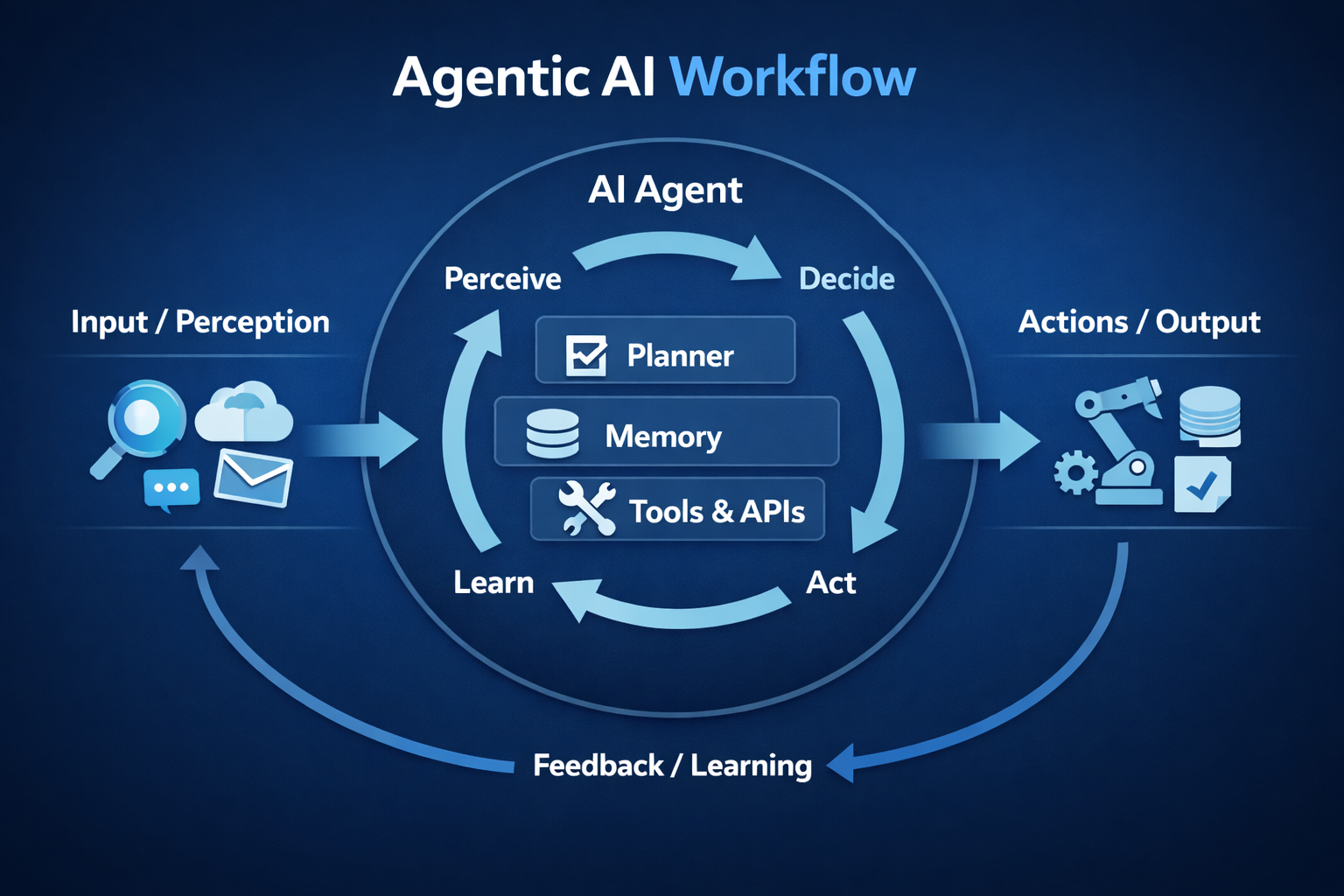

Agentic AI systems are typically characterized by several core properties. They operate with goal orientation, meaning they are designed to pursue specific outcomes rather than simply generate responses. They exhibit autonomy, initiating actions without continuous human prompting. They maintain some form of memory or state, allowing them to track progress and adjust strategies over time. They often integrate with external tools or systems, such as databases, APIs, or software environments, enabling them to extend their capabilities beyond internal computation. Finally, they demonstrate adaptability, refining their behavior based on feedback, constraints, or new information.

A key distinction between agentic AI and conventional AI lies in execution flow. Traditional AI systems, including many machine learning models, function in a request-response pattern: a user provides input, and the system generates output. Agentic AI, by contrast, may decompose a task into multiple sub-steps, determine the sequence of actions required, and execute those actions iteratively. For example, instead of answering a single query, an agentic system might retrieve data, analyze it, generate intermediate outputs, validate results, and adjust its approach before producing a final outcome.
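The difference in execution flow can be illustrated with a small sketch. Everything in it is hypothetical and chosen only for illustration: the function names `respond` and `run_agent`, the toy task (averaging readings after discarding outliers), and the `tolerance` check that stands in for validation. A real agentic system would usually delegate analysis and planning to a model rather than to fixed arithmetic.

```python
def respond(query: str) -> str:
    """Request-response pattern: one input, one output, no intermediate steps."""
    return f"answer to {query!r}"


def run_agent(readings: list[float], tolerance: float = 5.0, max_steps: int = 10) -> dict:
    """Iterative pattern: retrieve, analyze, validate, adjust, and repeat.

    Toy goal (an assumption for this sketch): report the mean of the
    readings once their spread (max - min) is within `tolerance`.
    """
    if not readings:
        raise ValueError("readings must not be empty")

    data = sorted(readings)  # retrieve the data to work on
    trace = []               # intermediate outputs, kept for inspection

    for step in range(1, max_steps + 1):
        # Analyze: compute intermediate results from the current data.
        mean = sum(data) / len(data)
        spread = max(data) - min(data)
        trace.append(f"step {step}: n={len(data)} mean={mean:.2f} spread={spread:.2f}")

        # Validate: stop as soon as the result satisfies the goal.
        if spread <= tolerance:
            return {"result": mean, "steps": step, "trace": trace}

        # Give up rather than discard almost all of the data.
        if len(data) <= 2:
            break

        # Adjust: drop the reading farthest from the mean, then try again.
        worst = max(data, key=lambda x: abs(x - mean))
        data.remove(worst)

    return {"result": None, "steps": len(trace), "trace": trace}


outcome = run_agent([10.0, 11.0, 12.0, 50.0])
print(outcome["result"], outcome["steps"])
```

With the sample input, the first pass fails validation because of the reading at 50.0, the sketch discards it, and the second pass succeeds. The `trace` list records each intermediate step, which is one simple way to keep a multi-step process inspectable.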

Agentic AI is closely associated with developments in large language models, which supply much of the reasoning and planning capability that complex task execution depends on. When combined with orchestration frameworks, these models can act as central decision-making components within broader systems. Such systems may include task planners, memory modules, and tool interfaces, forming architectures that resemble autonomous workflows. In some implementations, multiple agents collaborate or compete within a shared environment, giving rise to multi-agent systems that simulate coordination, negotiation, or distributed problem-solving.
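One way to picture such an architecture is as three cooperating parts: a planner that chooses the next action, a set of tool interfaces, and a memory that carries state between steps. The sketch below is a simplified illustration, not the design of any particular framework. The `Agent` class, the `scripted_planner` function, and the `lookup` and `summarize` tools are all hypothetical, and the planner is a hard-coded stand-in for the language model that would normally make these decisions.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A planner takes the goal and the memory so far, and returns either
# (tool_name, argument) for the next action or None when it is finished.
Planner = Callable[[str, list[str]], Optional[tuple[str, str]]]


@dataclass
class Agent:
    tools: dict[str, Callable[[str], str]]           # tool interfaces
    planner: Planner                                  # decision-making component
    memory: list[str] = field(default_factory=list)  # observations so far

    def run(self, goal: str, max_steps: int = 5) -> list[str]:
        for _ in range(max_steps):
            decision = self.planner(goal, self.memory)
            if decision is None:  # the planner considers the goal met
                break
            tool_name, argument = decision
            tool = self.tools.get(tool_name)
            if tool is None:
                observation = f"unknown tool: {tool_name}"
            else:
                observation = tool(argument)
            self.memory.append(observation)  # state carried to the next step
        return self.memory


def scripted_planner(goal: str, memory: list[str]) -> Optional[tuple[str, str]]:
    """Hard-coded stand-in for a model: look something up, then summarize it."""
    if len(memory) == 0:
        return ("lookup", goal)
    if len(memory) == 1:
        return ("summarize", memory[-1])
    return None


# Hypothetical tools; real ones might wrap a database, an API, or a shell.
TOOLS = {
    "lookup": lambda query: f"3 records about {query}",
    "summarize": lambda text: f"summary: {text}",
}

agent = Agent(tools=TOOLS, planner=scripted_planner)
history = agent.run("invoices")
print(history)
```

Keeping the planner as a plain callable means the scripted version can be swapped for a model-backed one without changing the loop, and the `max_steps` limit bounds how long the agent can act without returning control.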

Applications of agentic AI span a wide range of domains. In enterprise environments, agentic systems are used for workflow automation, handling tasks such as document processing, customer support, and operational decision-making. In software development, they can assist with code generation, debugging, and system monitoring, often interacting directly with development tools. In cybersecurity, agentic AI can support threat detection and response by continuously analyzing signals and initiating defensive actions. Consumer-facing applications include personal assistants capable of managing schedules, performing research, and executing multi-step digital tasks.

The rise of agentic AI also introduces new challenges and considerations. Autonomy increases the complexity of control and oversight, raising questions about accountability, reliability, and safety. Systems that act independently must be designed with safeguards to prevent unintended consequences, particularly when operating in sensitive environments. Transparency becomes more difficult as decision-making processes involve multiple steps and interactions. Additionally, the integration of external tools and data sources introduces security and privacy risks that must be carefully managed.

Another area of concern involves alignment, the degree to which an agent’s actions remain consistent with human intentions and constraints. As agentic systems gain the ability to pursue goals over extended periods, ensuring that those goals are interpreted and executed correctly becomes critical. This has led to increased research into monitoring mechanisms, constraint frameworks, and human-in-the-loop approaches that allow for intervention when necessary.
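A constraint framework with a human-in-the-loop checkpoint can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the action names, the `ALLOWED_ACTIONS` and `NEEDS_APPROVAL` sets, and the `guarded_execute` function are invented for this sketch, and the `approve` callback stands in for whatever review step (a prompt, a ticket, a dashboard) a real deployment would use.

```python
from typing import Callable

# Hypothetical policy: what the agent may do at all, and which of those
# actions require a person to sign off first.
ALLOWED_ACTIONS = {"read_file", "send_email", "delete_file"}
NEEDS_APPROVAL = {"send_email", "delete_file"}


def guarded_execute(action: str, target: str,
                    approve: Callable[[str, str], bool]) -> str:
    """Apply constraints before an agent-proposed action is carried out."""
    # Constraint check: refuse anything outside the allowed set.
    if action not in ALLOWED_ACTIONS:
        return f"blocked: {action} is not permitted"

    # Human-in-the-loop check: sensitive actions wait for approval.
    if action in NEEDS_APPROVAL and not approve(action, target):
        return f"declined: {action} on {target}"

    # In a real system the action would be performed here; the sketch
    # only reports what would have happened.
    return f"executed: {action} on {target}"


def always_deny(action: str, target: str) -> bool:
    """Stand-in reviewer that rejects every request."""
    return False


print(guarded_execute("read_file", "report.txt", always_deny))
print(guarded_execute("delete_file", "report.txt", always_deny))
print(guarded_execute("format_disk", "/dev/sda", always_deny))
```

Placing the checks in a single function that every action passes through gives one point at which to log, audit, or intervene, which is the property monitoring and intervention mechanisms generally aim for.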

Agentic AI is often discussed alongside related concepts such as autonomous systems, intelligent agents, and AI orchestration. While these terms overlap, agentic AI emphasizes the combination of autonomy, goal-directed behavior, and multi-step execution within modern AI architectures. It reflects a broader shift in artificial intelligence from static models toward dynamic systems capable of acting within complex environments.

As of the mid-2020s, agentic AI remains an evolving field, with definitions and implementations continuing to develop. Its growing adoption across industries suggests a transition in how software is designed and used, moving from tools that assist users to systems that act on their behalf. This shift has implications not only for technology development but also for organizational structures, workflows, and the broader relationship between humans and intelligent systems.